The ruling addresses the implementation of opaque models.


A court in the Netherlands has handed down a ruling declaring the use of an algorithm designed to combat Social Security fraud contrary to the European Convention on Human Rights, and therefore illegal.

The Netherlands Legal Committee for Human Rights, together with consumer associations and two private citizens, had brought a case against the Dutch state over its use of an algorithmic risk indication system (SyRI), which is used to predict the probability that applicants for state benefits will commit fraud in their Social Security contributions and in the payment of taxes.

The regulation defined SyRI as a technical infrastructure that allows data to be linked and analyzed anonymously in a secure environment in order to generate risk reports.

The Judgment rejects the arguments of the Dutch State and establishes that there is a special responsibility in the use of new technologies, ruling that SyRI fails to comply with article 8 of the European Convention on Human Rights, the right to respect for private and family life. It refers to article 8.2 and interprets this provision as requiring an adequate balance between, on the one hand, the measures implemented by the State and the advantages associated with the use of these technologies and, on the other, the interference that such use may cause with the right to respect for private life.

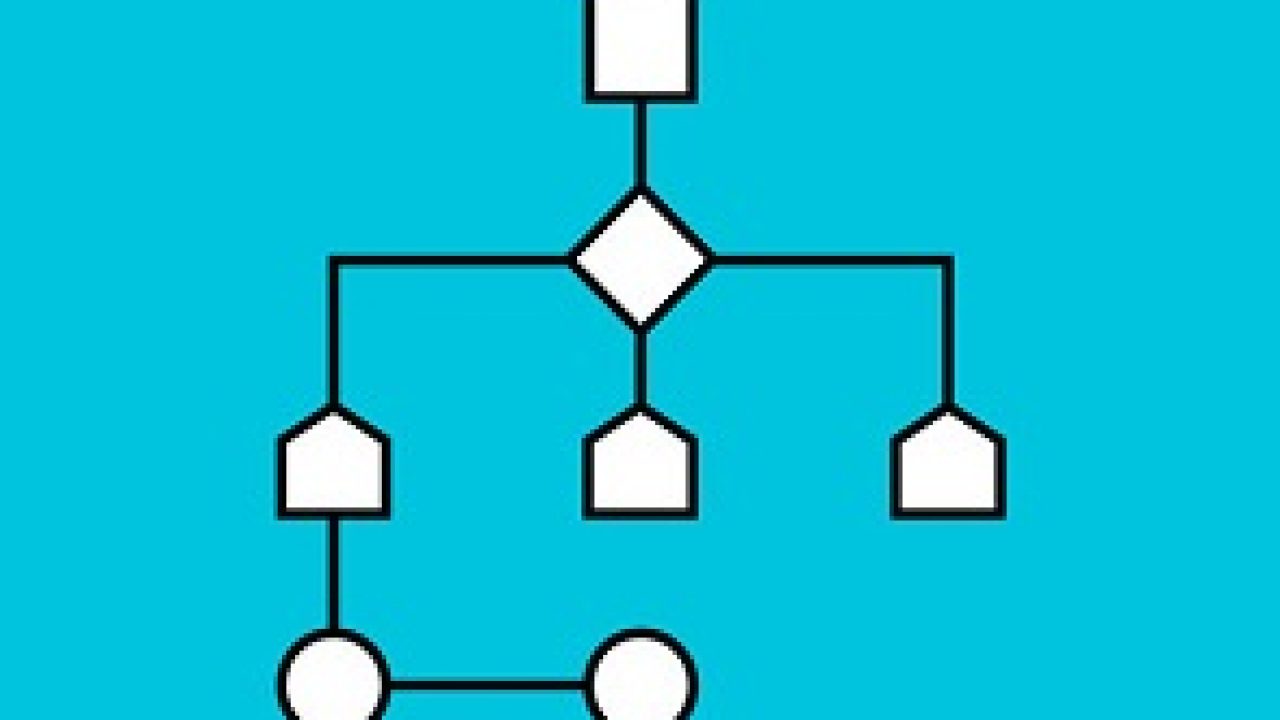

Lack of transparency of black box systems.

Transparency is at the heart of the debate on the use of algorithms, especially their use by public administrations. The US has regulated their use by Federal Agencies, and the EU Commission has established transparency as an essential requirement for developing artificial intelligence. It must be possible to reconstruct how and why an algorithm behaves in a certain way, and those who interact with an artificial intelligence system must be told that they are doing so and who is responsible for it.

SyRI is a black-box algorithm: it is not possible to identify the reasons that led it to make a given decision.

The use of black box technology clashes head-on with the regulations that demand transparency, control, traceability and the possibility of identifying the decision-making processes of the algorithms.

In the private sector, demanding transparency from operators will be even more difficult.
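To make the traceability requirement concrete, the following is a minimal Python sketch of a scoring routine that records why it reached its result and who answers for it. It is purely illustrative and is not based on how SyRI works: the feature names, weights, threshold and the `responsible` field are all hypothetical assumptions.

```python
from dataclasses import dataclass

# Hypothetical point weights for illustration only; not taken from SyRI.
WEIGHTS = {
    "unreported_income_signals": 5,
    "address_mismatches": 3,
    "late_filings": 2,
}
THRESHOLD = 10  # hypothetical cut-off above which a case is flagged


@dataclass
class Decision:
    score: int
    flagged: bool
    contributions: dict  # feature name -> points it added to the score
    responsible: str     # who answers for the decision


def assess(features: dict, responsible: str = "case officer (hypothetical)") -> Decision:
    """Score a case and keep a record of how each input contributed."""
    contributions = {name: WEIGHTS[name] * features.get(name, 0) for name in WEIGHTS}
    score = sum(contributions.values())
    return Decision(score, score >= THRESHOLD, contributions, responsible)


decision = assess({"unreported_income_signals": 1, "address_mismatches": 2, "late_filings": 0})
for name, points in decision.contributions.items():
    print(f"{name}: {points} points")
print("flagged:", decision.flagged, "| responsible:", decision.responsible)
```

Because every contribution is stored alongside the outcome, the decision can be reconstructed and challenged afterwards, which is exactly what a black-box system does not allow.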

Perpetuation of discriminatory patterns.

The perpetuation and amplification of social prejudices embedded in historical data on health, criminal justice, tax or labor investigations is a cause for concern.

Guarantees about the neutrality of the algorithms.

The proposed US Algorithmic Accountability Act requires an audit with three levels of analysis: the design of the algorithm, the data used to train it, and the results obtained. The reason is that the use of algorithms can undermine the functioning of the free market, harm consumers, and deny historically disadvantaged or vulnerable groups full protection of their rights and freedoms.
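As an illustration of the third audit level (the results obtained), the Python sketch below compares how often a system flags cases in different groups. The choice of metric, the function names and the sample data are assumptions made for this example; the text above does not prescribe any particular measure.

```python
from collections import defaultdict


def flag_rates(records):
    """Return the share of flagged cases per group.

    `records` is an iterable of (group, flagged) pairs.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in records:
        totals[group] += 1
        if is_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Ratio of the lowest to the highest flag rate (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up sample data: (group label, flagged by the system)
sample = [("A", True), ("A", False), ("A", False), ("A", False),
          ("B", True), ("B", True), ("B", False), ("B", False)]
rates = flag_rates(sample)
ratio = rate_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0 does not prove discrimination on its own, but it tells an auditor where to look more closely at the design and the training data.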

Protection of Human Rights.

The Judgment uses the violation of the right to private life as the legal basis for declaring SyRI unlawful and for limiting the use of certain technologies, opening a new line of argument for future cases.

This reopens the debate on how new technologies such as artificial intelligence, Smart Contracts or Blockchain fit into the existing legal framework, and on the need for new, specific regulations to address the particular problems that have arisen in the digital society.

__________________________________________________

It is an indisputable fact that we use Artificial Intelligence in our smartphones and in Google, that we receive recommendations for music, books or news, and that personal assistants listen to us, understand us and help us. AI is also used in medical diagnoses, in selection processes, and in the granting of credit.

AI is one of the strategic disciplines for becoming more competitive and for addressing challenges such as the climate emergency or the aging of the population. It also has its danger zones, because it creates situations of asymmetry: whoever has access to the data and the ability to do something with it has the power, and right now very few do. That is why the scales need to be rebalanced to ensure that development is inclusive.

AI can also generate content that is false but indistinguishable from the truth. Nor are these systems invulnerable: they can be hacked and tricked.

Some countries, notably China and the US, have realized that whoever dominates artificial intelligence will dominate the world, and have decided to invest ambitiously. Europe is trying to reduce the funding gap while preserving its values: non-discrimination, inclusion, transparency, human rights, privacy, etc.

Since not all technological development is progress, society must decide what kind of development it wants in order to improve the quality of life of people and of the entire planet. There is not enough debate on this issue, mainly because the subject is so poorly understood, and it is well known that whoever has the knowledge has the power.

The huge volume of data that we call big data can be very valuable in helping us make fairer decisions. Human decisions are susceptible to corruption, conflicts of interest, etc. If we overcome these limitations, we will have a great opportunity in economic development, public health, transportation, education...

Concepts such as justice, the attribution of responsibility, veracity, diversity or reliability have a mathematical translation: formulations that are incorporated into algorithms. Citizens have the right to know the parameters of that translation.
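As one illustration of what such a mathematical translation can look like (it is an example chosen here, not one drawn from the ruling), a widely used formalization of non-discrimination is demographic parity. Writing $\hat{Y} = 1$ for "the system flags the person" and $A$ for membership of a protected group, it asks that every pair of groups $a$ and $b$ be flagged at the same rate:

$$
P(\hat{Y} = 1 \mid A = a) = P(\hat{Y} = 1 \mid A = b)
$$

Other definitions of fairness exist and can conflict with this one, which is precisely why the choice of formulation should be open to public scrutiny.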

Each citizen bears a great responsibility to learn. Society must mobilize and decide what development it wants. That is why education is so important: using technology is very different from understanding how it works. Computational thinking is as important in the 21st century as knowing how to read or do math. We must learn to use technology as a tool to solve problems. The fourth industrial revolution will generate hundreds of thousands of jobs that will go unfilled because there are not enough trained people.